HP Servers and Persistent Memory

Advances in hardware and software have converged to allow storing your entire database in memory (depending on how large it is), even if you don’t use Microsoft’s In-Memory OLTP feature.

HP Gen9 servers support NVDIMM-N (known as Persistent Memory), which at launch had a maximum size of 8GB per module; with 16 slots, that gave a total server capacity of 128GB. That is hardly large enough to run today’s mega-sized databases, and in any case there was no way to actually store your database there. So the use case for SQL Server 2016 was to store transaction log blocks there. This could be beneficial in general, but particularly when using durable memory-optimized tables. That’s because WRITELOG waits for the transaction log could be a scalability bottleneck, which reduced the benefit of migrating to In-Memory OLTP.

There were other potential issues when using Persistent Memory, detailed in this blog post. But what’s not covered in that post is the fact that deploying NVDIMM-N reduced memory speed and/or capacity, because NVDIMM-N is not compatible with LRDIMM. That forces you to use RDIMM, which reduces capacity, and because NVDIMM-N operates at a slower speed than RDIMM, it also reduces total memory speed.

HP has since released Gen10 servers, and they have changed the landscape for those seeking reduced latency by storing larger data sets in memory. For one thing, they raise the bar for what’s now referred to as Scalable Persistent Memory, with a total server capacity of 1TB. To be clear, NVDIMM-N is not used in this configuration. Instead, regular DIMMs are used, and they are persisted to flash via a power source (this was also the case for NVDIMM-N, but there the flash, DIMM, and power source were all located on the NVDIMM-N itself).

In this video, Bob Ward demonstrates a ~5x performance increase for the industry’s first “diskless” database, using an HPE Gen10 server, SUSE Linux, Scalable Persistent Memory, and columnstore (presumably on a “traditional/formerly on-disk table”, not a memory-optimized table, although that’s not specifically detailed in the video).

Brett Gibbs, Persistent Memory Category Manager for HP servers, states in this video that even databases that use In-Memory OLTP can benefit from Scalable Persistent Memory, because the time required to restart the database can be significantly reduced. He stated that a 200GB memory-optimized database that took 20 minutes to restart on SAS drives restarted in 45 seconds using Scalable Persistent Memory. However, no details are provided about the circumstances under which those results were obtained.

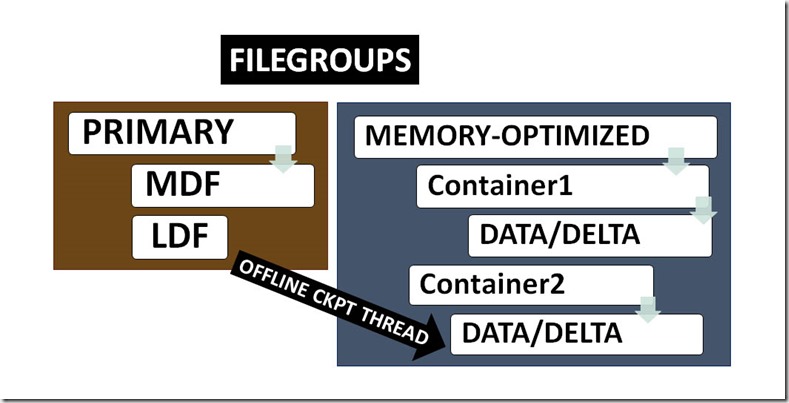

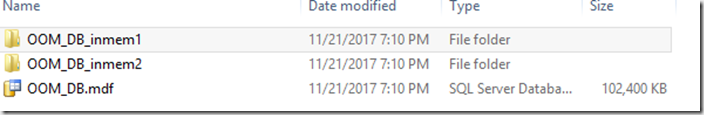

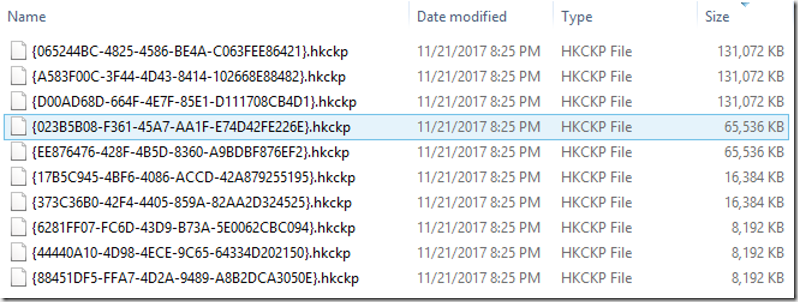

We are left to guess about the number of containers used, and the IOPS available from storage. It may be that in both cases, they tested using a single container, which would be a worst practice. And if that’s correct, then to reduce database restart time, all you would have to do is spread the containers across more volumes, to “parallelize” the streaming from storage to memory.

I’m assuming that the 45 seconds specified represents the amount of time required to get durable memory-optimized data from flash storage back into memory. If that’s correct, then the reduction of time required to restart the database has nothing to do with the Scalable Persistent Memory (other than memory speed), and everything to do with how fast flash storage can read data.
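To put the quoted figures in perspective, here is a rough back-of-the-envelope calculation of the sustained read throughput that each restart time implies. It is only a sketch: it assumes the full 200GB is streamed from storage during restart, that 1GB = 1024MB, and the function name is my own invention.

```python
def implied_throughput_mb_per_s(data_gb: float, seconds: float) -> float:
    """Average read rate (MB/s) needed to load data_gb within the given time."""
    if data_gb <= 0 or seconds <= 0:
        raise ValueError("data_gb and seconds must be positive")
    return data_gb * 1024 / seconds


# Figures quoted in the HP video: 200GB, 20 minutes vs. 45 seconds.
sas = implied_throughput_mb_per_s(200, 20 * 60)
flash = implied_throughput_mb_per_s(200, 45)

print(f"SAS drives: {sas:,.0f} MB/s")
print(f"Flash:      {flash:,.0f} MB/s")
print(f"Ratio:      {flash / sas:.1f}x")
```

Under those assumptions, the SAS result works out to roughly 171 MB/s and the 45-second result to roughly 4,551 MB/s. The first number is low enough that it would be consistent with my guess that a single container on a single volume was used, although the video doesn't say.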

Licensing

The HP video also details how there might be a licensing benefit. It’s stated that if your workload requires 32 cores to perform well, and you reduce latency through the use of Scalable Persistent Memory, then you might be able to handle the same workload with fewer cores. I’d love to see independent test results of this.

In-Memory OLTP

If you are considering placing a database entirely in memory, and don’t want to be tied to a specific hardware vendor’s solution, In-Memory OLTP might be an option to consider.

This is a vast topic that I’ve been interested in for quite a while, so I’ll summarize only some of the potential benefits:

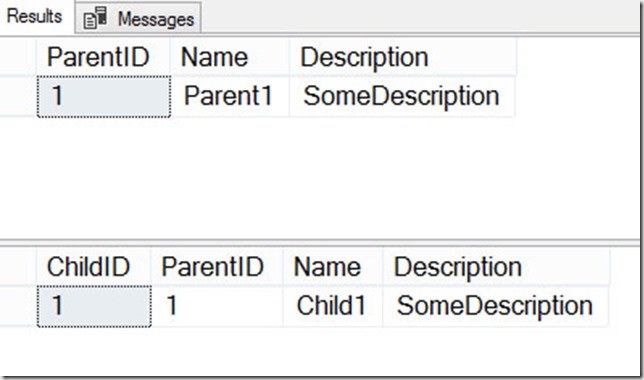

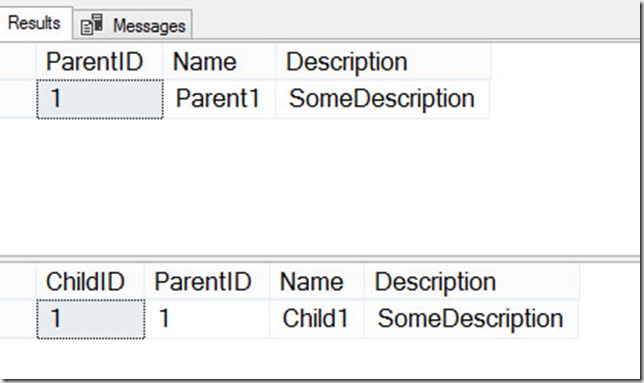

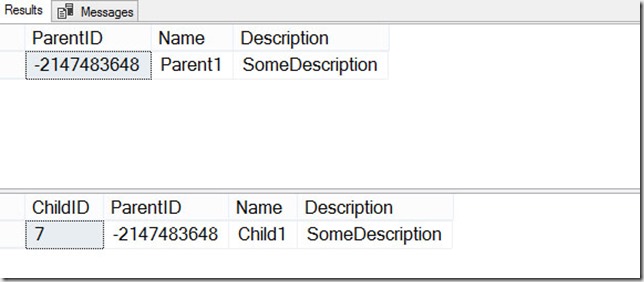

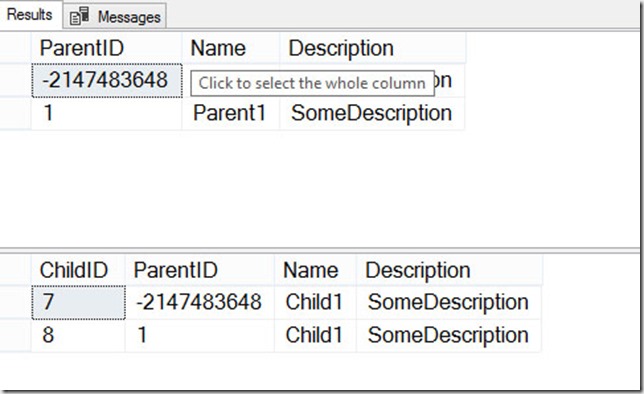

- Maintaining referential integrity – Microsoft recommends keeping cold data in on-disk tables, and hot data in memory-optimized tables. But there’s just one problem with that: FOREIGN KEY constraints are not supported between on-disk and memory-optimized tables. Migrating all data to memory-optimized tables solves this specific issue.

- Native compilation – natively compiled modules can only reference memory-optimized tables. If you can deal with the potential T-SQL surface area restrictions, migrating all data to memory-optimized tables might allow greater use of native compilation.

- Single table structure – if you were to keep cold data on disk, and hot data in-memory, you would need to use two different table names, and perhaps reference them through a view. Migrating all data to memory-optimized tables solves this problem.

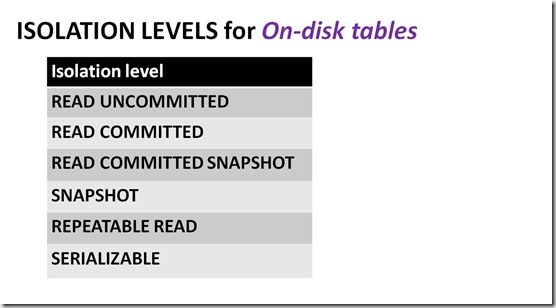

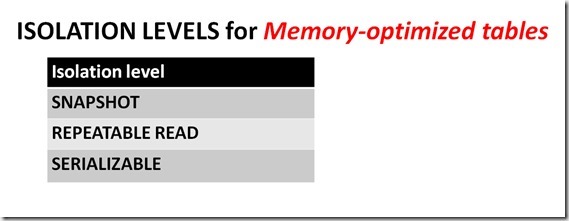

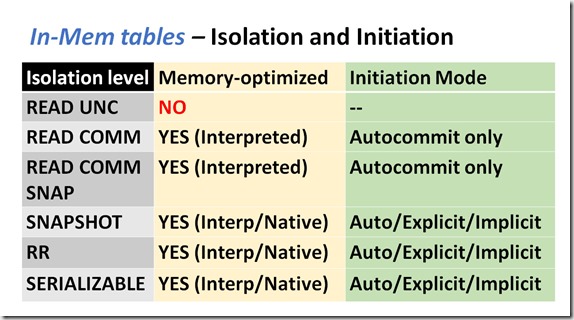

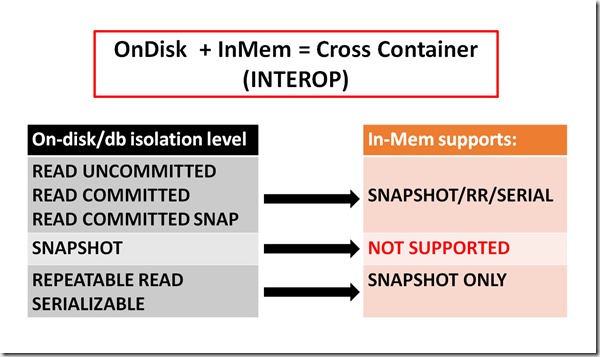

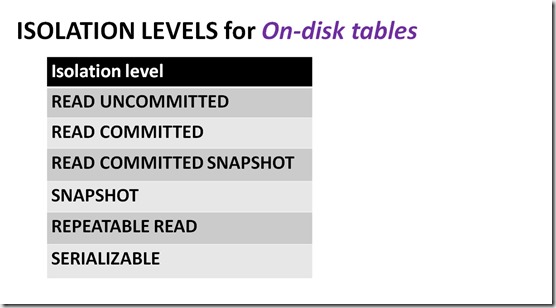

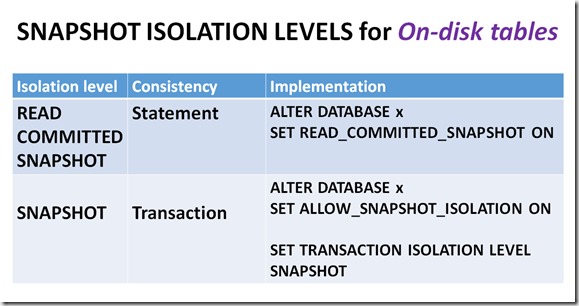

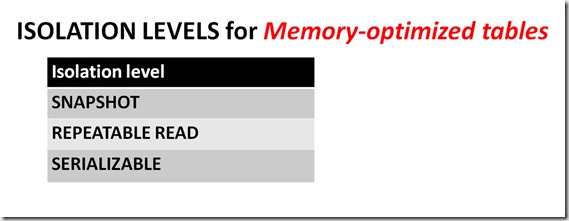

- Unsupported isolation levels for cross-container transactions – it’s possible to reference both on-disk and memory-optimized tables in a single query, but memory-optimized tables only support a subset of the isolation levels that are available for on-disk tables, and some combinations are not supported (SNAPSHOT, for example).

- Near-zero index maintenance – other than potentially reconfiguring the bucket count for HASH indexes, HASH and RANGE indexes don’t require any type of index maintenance. FILLFACTOR and fragmentation don’t exist for any of the indexes that are supported for memory-optimized tables.

- Very large memory-optimized database size – Windows Server 2016 supports 24TB of memory, and most of that could be assigned to In-Memory OLTP, if you are using Enterprise Edition. This is way beyond the capacity supported by the current line of HP servers using Scalable Persistent Memory.
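On the bucket count mentioned under index maintenance above: the usual guidance is to size a HASH index at somewhere between one and two times the number of distinct key values, and SQL Server rounds the value you specify up to the next power of two. The helper below is a hypothetical sketch of that arithmetic (the function name and the default factor of 2 are my assumptions); it is a starting point for sizing, not a substitute for checking actual bucket usage on your own data.

```python
import math


def suggested_bucket_count(distinct_keys: int, factor: float = 2.0) -> int:
    """Return distinct_keys * factor, rounded up to the next power of two.

    factor is assumed to sit between 1 and 2, per the usual sizing guidance.
    """
    if distinct_keys < 1:
        raise ValueError("distinct_keys must be at least 1")
    if not 1.0 <= factor <= 2.0:
        raise ValueError("factor is expected to be between 1 and 2")
    target = math.ceil(distinct_keys * factor)
    # (target - 1).bit_length() is the exponent of the smallest power of two >= target
    return 1 << (target - 1).bit_length()


print(suggested_bucket_count(1_000_000))       # 2097152
print(suggested_bucket_count(1_000_000, 1.0))  # 1048576
```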

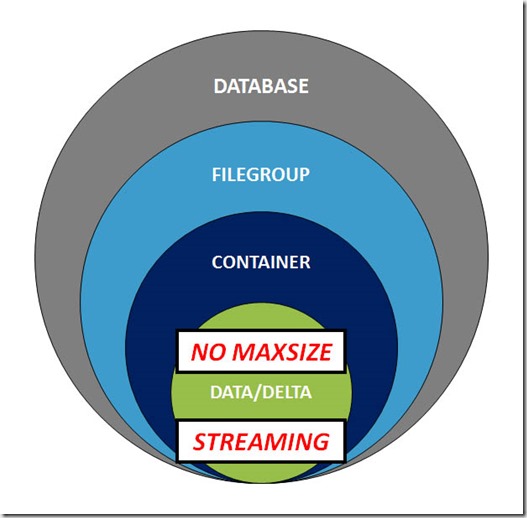

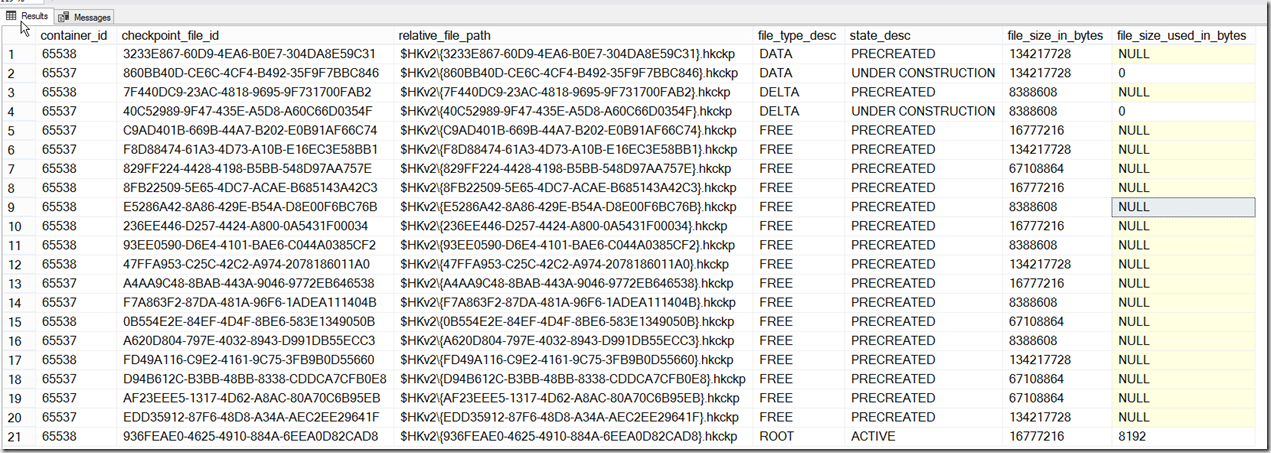

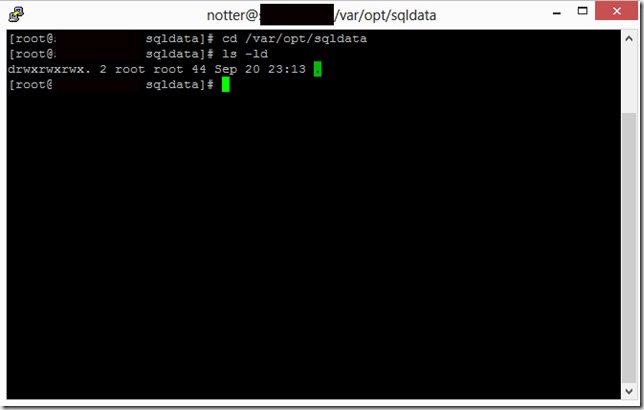

One crucial point: if you decide to migrate an entire database to In-Memory OLTP, then database recovery time must be rigorously tested. You will need to have enough containers spread across enough volumes to meet your RTO SLA.
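As a starting point for that testing, you can estimate how many volumes the containers would need to span for a given RTO. This is a minimal sketch under some loud assumptions: recovery is purely I/O bound, read throughput scales linearly as volumes are added, and 1GB = 1024MB. The function name, the 120-second RTO, and the 500 MB/s per-volume figure are all hypothetical; substitute numbers measured on your own storage.

```python
import math


def volumes_needed(data_gb: float, rto_seconds: float, mb_per_s_per_volume: float) -> int:
    """Minimum number of volumes to stream data_gb into memory within rto_seconds.

    Assumes recovery is I/O bound and throughput scales linearly per volume.
    """
    if data_gb <= 0 or rto_seconds <= 0 or mb_per_s_per_volume <= 0:
        raise ValueError("all arguments must be positive")
    required_mb_per_s = data_gb * 1024 / rto_seconds
    return math.ceil(required_mb_per_s / mb_per_s_per_volume)


# Hypothetical example: 200GB of durable memory-optimized data,
# a 120-second RTO, and volumes that each sustain 500 MB/s of reads.
print(volumes_needed(200, 120, 500))  # 4
```

An estimate like this only tells you where to begin. Real recovery time also depends on factors the sketch ignores, so the result still has to be confirmed by actually restarting the database.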