SQL Server 2017 includes enhancements to many features, and some of those enhancements include In-Memory OLTP.

- Microsoft states that ALTER TABLE for memory-optimized tables is now “usually substantially faster”. I asked for clarity about that – if it means that ALTER TABLE is faster for the same events that were able to be executed in parallel and minimally logged in SQL 2016, or if there are new ALTER TABLE statements which now execute in parallel. They replied that there is no change to the set of operations that executed in parallel. So the ALTER TABLE commands that executed fast now (theoretically) execute faster.

- Up to and including SQL 2016, the maximum number of nonclustered indexes on a memory-optimized table was eight, but that limitation has been removed for SQL 2017. I’ve tested this with almost 300 indexes, and it worked. With this many supported indexes, it’s no wonder they had to….

- Enhance the index rebuild performance for nonclustered indexes during database recovery. I confirmed with Microsoft that the database does not have be in SQL 2017 compatibility mode (140) to benefit from the index rebuild enhancement. This type of rebuild happens not only for database restore and failover, but also for other “recovery events” – see my blog post here.

- In SQL 2017, memory-optimized tables now support JSON in native modules (functions, procedures and check constraints).

- Computed columns, and indexes on computed columns are now supported

- TSQL enhancements for natively compiled modules include CASE, CROSS APPLY, and TOP (N) WITH TIES

- Transaction log redo of memory-optimized tables is now done in parallel. This has been the case for on-disk tables since SQL 2016, so it’s great that this potential bottleneck for REDO has been removed.

- Memory-optimized filegroup files can now be stored on Azure Storage, and you can also backup and restore memory-optimized files on Azure Storage.

- sp_spaceused is now supported for memory-optimized tables

- And last but definitely not least, drum roll, please…….we can now rename memory-optimized tables and natively compiled modules

While Microsoft continues to improve columnstore indexes for on-disk tables, unfortunately columnstore for memory-optimized tables gets left further and further behind. Case in point would be support for LOB columns for on-disk tables in SQL 2017, but no such support for memory-optimized tables. And my good friend Niko Neugebauer (b|t) just reminded me that computed columns for on-disk CCI are supported in SQL 2017, but they are not supported for in-memory CCI. For an in-depth comparison of columnstore differences between on-disk and memory-optimized tables, see my post here.

In addition to what’s listed above, I tested the following functionality for natively compiled stored procedures:

STRING_AGG()

This works, but you can’t use character functions, such as CHAR(13):

CREATE PROCEDURE dbo.Proc_VehicleRegistration

WITH NATIVE_COMPILATION, SCHEMABINDING AS

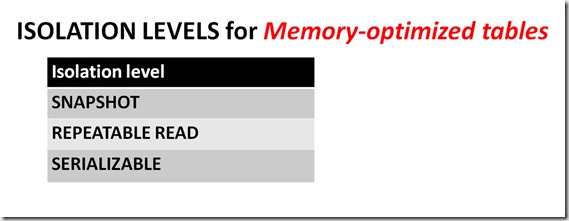

BEGIN ATOMIC WITH (TRANSACTION ISOLATION LEVEL = SNAPSHOT, LANGUAGE = N'English')

SELECT

STRING_AGG(VehicleRegistration, CHAR(13)) AS csv – fails

FROM Warehouse.VehicleTemperatures

WHERE Warehouse.VehicleTemperatures.VehicleTemperatureID BETWEEN 65190 AND 65200

END;

GO

CONCAT_WS()

CREATE OR ALTER PROCEDURE dbo.Proc_VehicleTemperatures

WITH NATIVE_COMPILATION, SCHEMABINDING AS

BEGIN ATOMIC WITH (TRANSACTION ISOLATION LEVEL = SNAPSHOT, LANGUAGE = N'English')

SELECT CONCAT_WS( ' - ', VehicleTemperatureID, VehicleRegistration) AS DatabaseInfo

FROM Warehouse.VehicleTemperatures

END;

GO

EXEC dbo.Proc_VehicleTemperatures

GO

TRIM()

CREATE OR ALTER PROCEDURE dbo.Proc_TrimTest

WITH NATIVE_COMPILATION, SCHEMABINDING AS

BEGIN ATOMIC WITH (TRANSACTION ISOLATION LEVEL = SNAPSHOT, LANGUAGE = N'English')

SELECT TRIM(VehicleRegistration) AS col1

FROM Warehouse.VehicleTemperatures

END;

GO

EXEC dbo.Proc_TrimTest

GO

TRANSLATE()

CREATE OR ALTER PROCEDURE dbo.Proc_TranslateTest

WITH NATIVE_COMPILATION, SCHEMABINDING AS

BEGIN ATOMIC WITH (TRANSACTION ISOLATION LEVEL = SNAPSHOT, LANGUAGE = N'English')

SELECT TRANSLATE('2*[3+4]/{7-2}', '[]{}', '()()');

END;

GO

EXEC dbo.Proc_TranslateTest

GO

sys.dm_db_stats_histogram()

CREATE STATISTICS stat_VehicleTemperatures ON Warehouse.VehicleTemperatures(VehicleRegistration)

SELECT s.object_id, OBJECT_NAME(s.object_id), hist.step_number, hist.range_high_key, hist.range_rows,

hist.equal_rows, hist.distinct_range_rows, hist.average_range_rows

FROM sys.stats AS s

CROSS APPLY sys.dm_db_stats_histogram(s.[object_id], s.stats_id) AS hist

WHERE OBJECT_NAME(s.object_id) = 'VehicleTemperatures'

My wish list for the In-Memory OLTP feature is huge, but it’s great to see Microsoft continually improve and extend it.